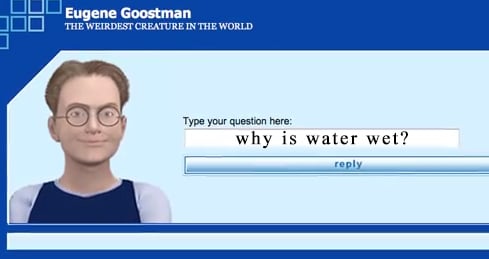

Eugene the Chatbot Did Not Pass the Turing Test

Over the past weekend, many “credible” media outlets falsely reported that a “supercomputer” had convinced about 30 percent of the judges that it was a 13-year-old boy, thereby passing the elusive Turing test. Over the following days, several experts pointed out that these reports were false. The University of Reading sponsored the event. A computer …